Max for Live Introduction

Max for Live took shape soon after the developers at Cycling '74 met with Robert Henke and Gerhard Behles of Ableton. It allows devices such as synthesisers, drum machines, audio effects and MIDI effects to be developed, and live video to be brought into the music creation and performance software, using Max/MSP. A Max for Live device can be a MIDI effect (which processes MIDI data), an audio effect (which processes audio) or an instrument (which takes MIDI performance data and transforms it into audio).

These devices can be created inside Ableton Live with real-time processing, so you can hear an instrument as you develop it. A set of objects specific to Max for Live (whose names all begin with live.) makes it possible to map the parameters of a device to a hardware MIDI controller.

Some of the objects include:

live.dial: A circular slider or knob that outputs numbers according to its degree of rotation.

live.gain~: A decibel volume slider and level meter.

live.slider: Outputs numbers as a slider is moved on screen.

live.toggle: A toggle switch that outputs 0 when turned off and 1 when turned on.

By pressing Cmd+M, these live. objects can be mapped to any recognised MIDI controller. A GUI (Graphical User Interface) can also be designed within the Max for Live vertical limit, making the device easier to use and more accessible.

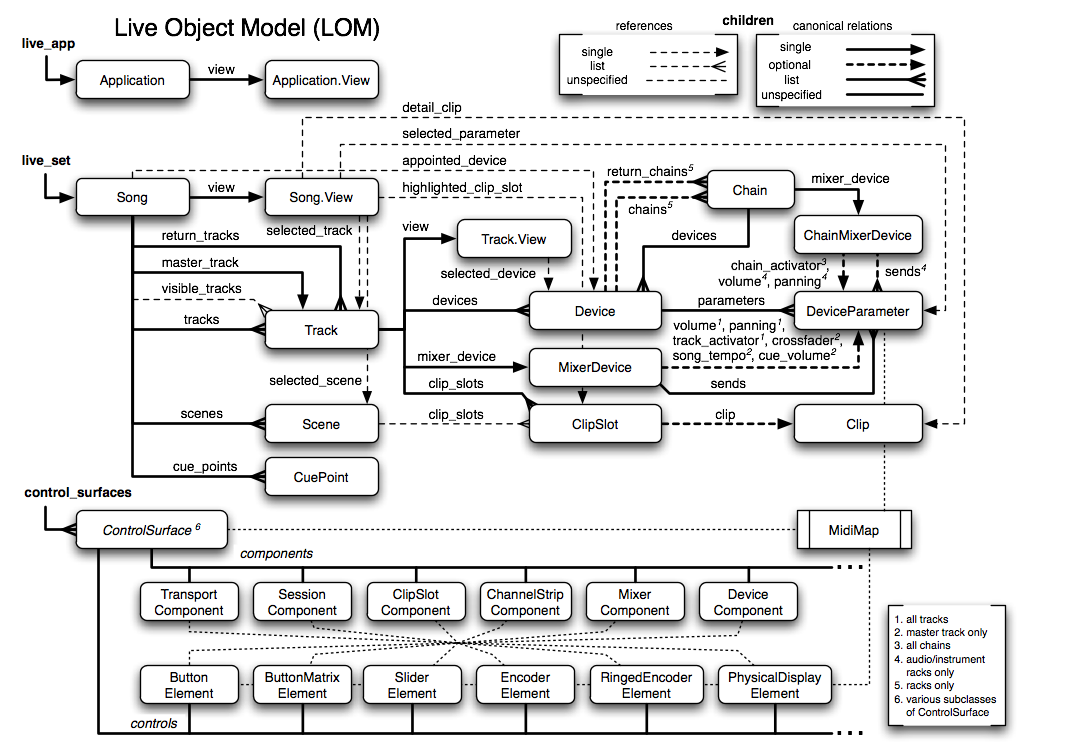

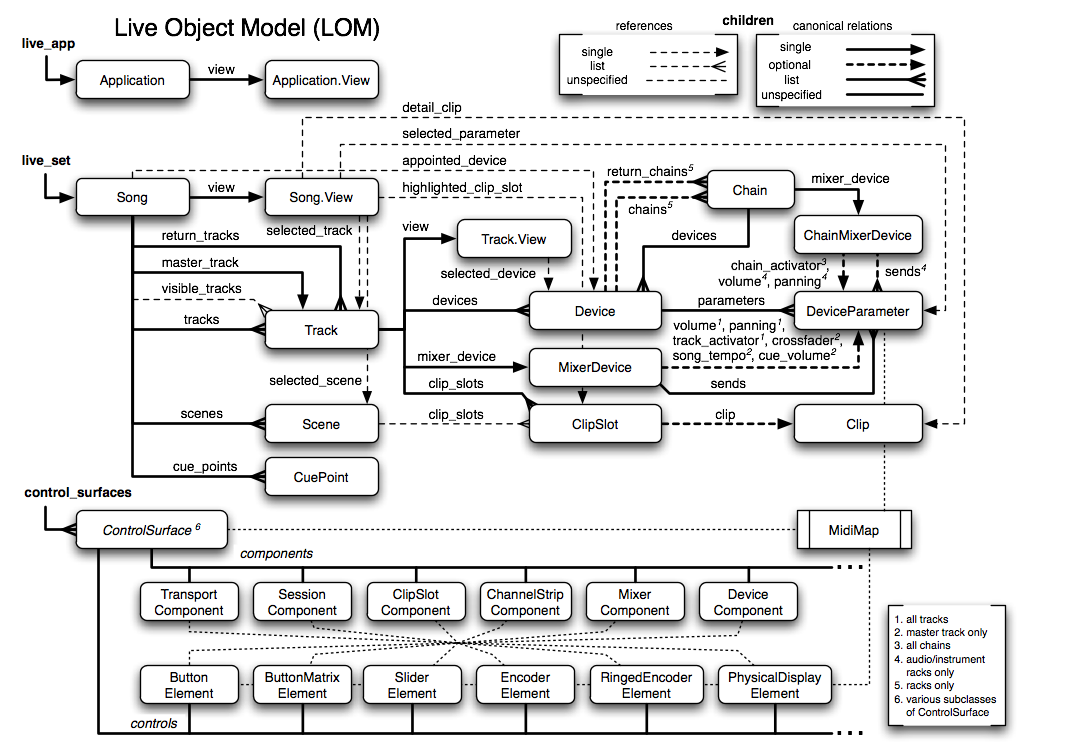

Max for Live works by accessing the Live Object Model, a map of everything that is accessible within Ableton Live. Not all parameters are exposed through the music software’s Application Programming Interface (API); the Live Object Model shows what Max for Live has access to.
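As a rough illustration of the idea of a map that you navigate by path, here is a small Python sketch. It is not the Live API: the dictionary, its contents and the `resolve` helper are all hypothetical, and the toy treats children and properties alike, which the real object model does not. The only thing borrowed from Live is the general shape of a path such as `live_set tracks 0 devices 0`.

```python
# Hypothetical toy data standing in for a tiny part of a Live set.
toy_model = {
    "live_set": {
        "tempo": 120.0,
        "tracks": [
            {"name": "Drums", "devices": [{"name": "Example Device"}]},
        ],
    }
}


def resolve(model, path):
    """Walk a space-separated path through nested dicts and lists."""
    node = model
    for step in path.split():
        if isinstance(node, list):
            node = node[int(step)]  # numeric steps index into a list
        else:
            node = node[step]  # named steps look up a key
    return node


print(resolve(toy_model, "live_set tracks 0 devices 0 name"))
```

Asking the toy for something it does not contain, for example `live_set tracks 0 pan`, raises a `KeyError`. That mirrors the point above: only what appears in the model can be reached.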

Creating a Max for Live Jitter Patch:

Below I am going to briefly demonstrate how to create a basic Max for Live Jitter patch. I recommend you right click on each object used and select “reference” to read up on it; in my opinion it is one of the best ways to learn Max. The download link for the created patch is below. The idea of this post is to show people who are new to Jitter how to create a basic audio reactive patch that works within Ableton Live, and I have added comments in the patch to explain how it works.

To create a Max for Live device, first open Ableton Live. Select Max for Live and drag an empty Max for Live audio effect onto the master channel.

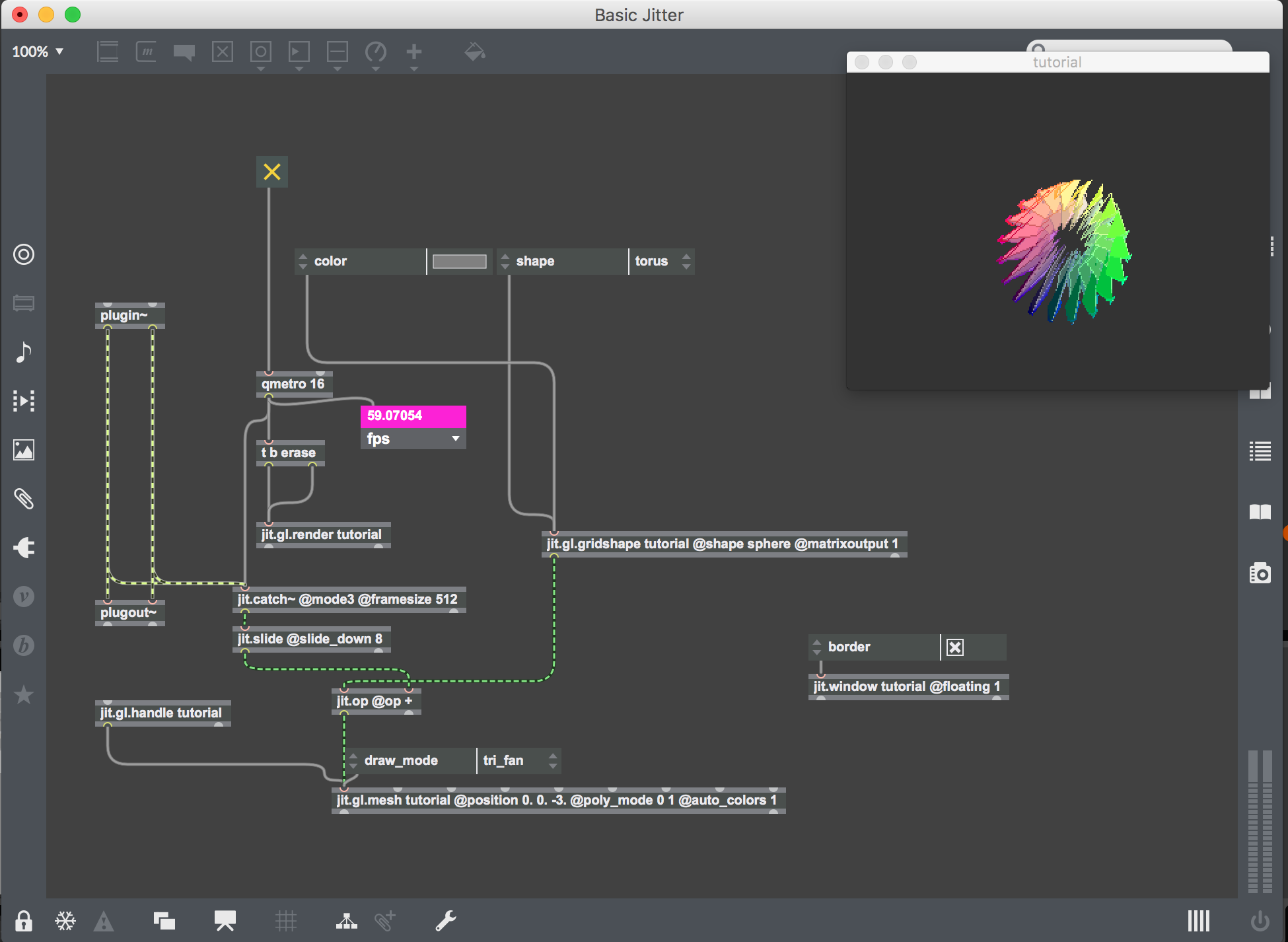

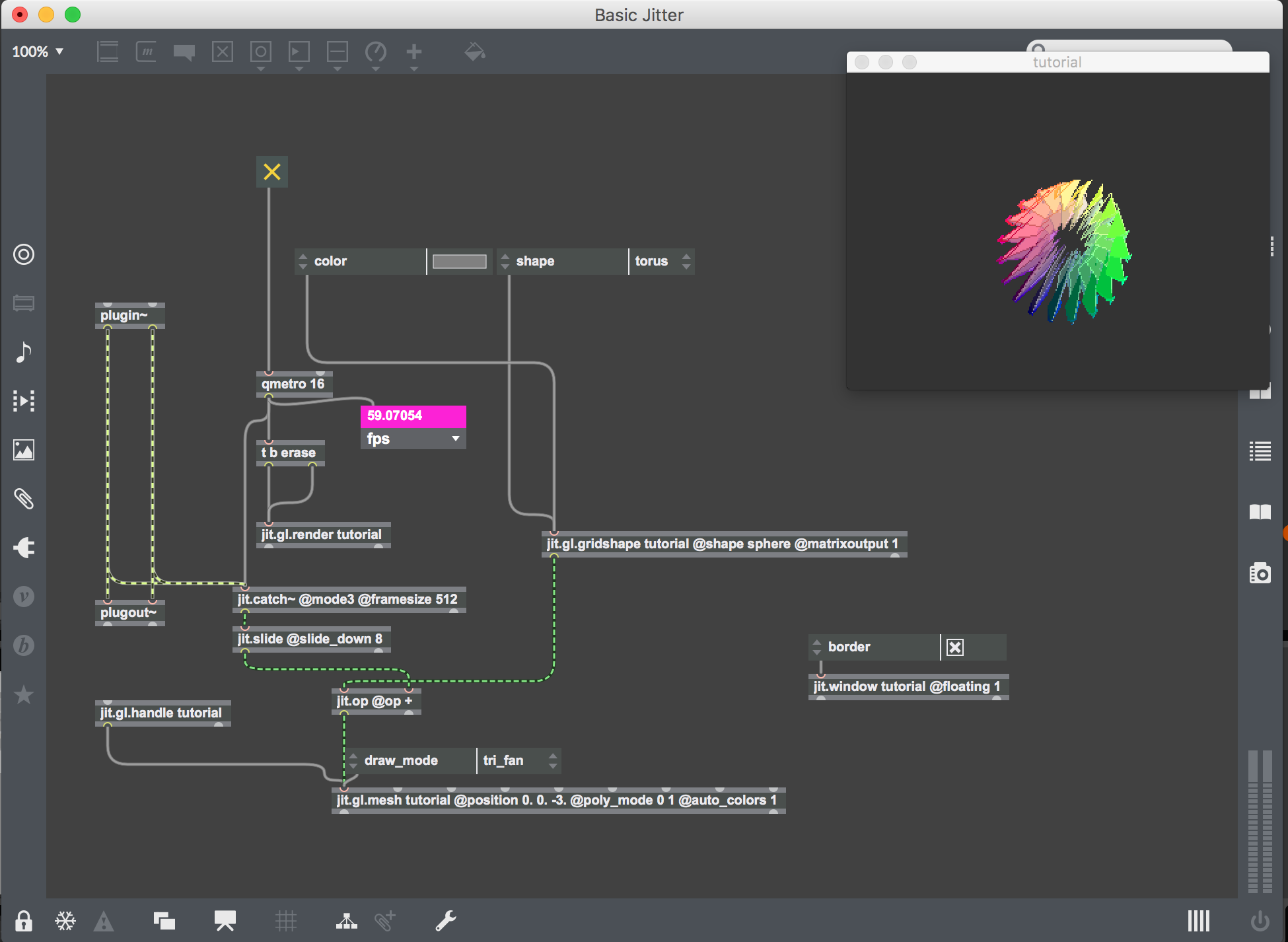

This creates an empty audio device patch with just the plugin~ and plugout~ objects. These represent the audio coming from Ableton Live and the audio being sent to the audio output device.

When creating an audio effect, the signal from Ableton (indicated by the green and white patch cords) is routed through the effect you create and then sent to the left and right outputs.

For a Jitter patch, a copy of the audio signal is taken for audio analysis while leaving the plugin~ and plugout~ objects intact. This means that the audio will play as normal while also being sent to the relevant Jitter object for audio analysis.

Drag a track of your choice onto the same channel; its audio will be analysed to drive the Jitter patch.

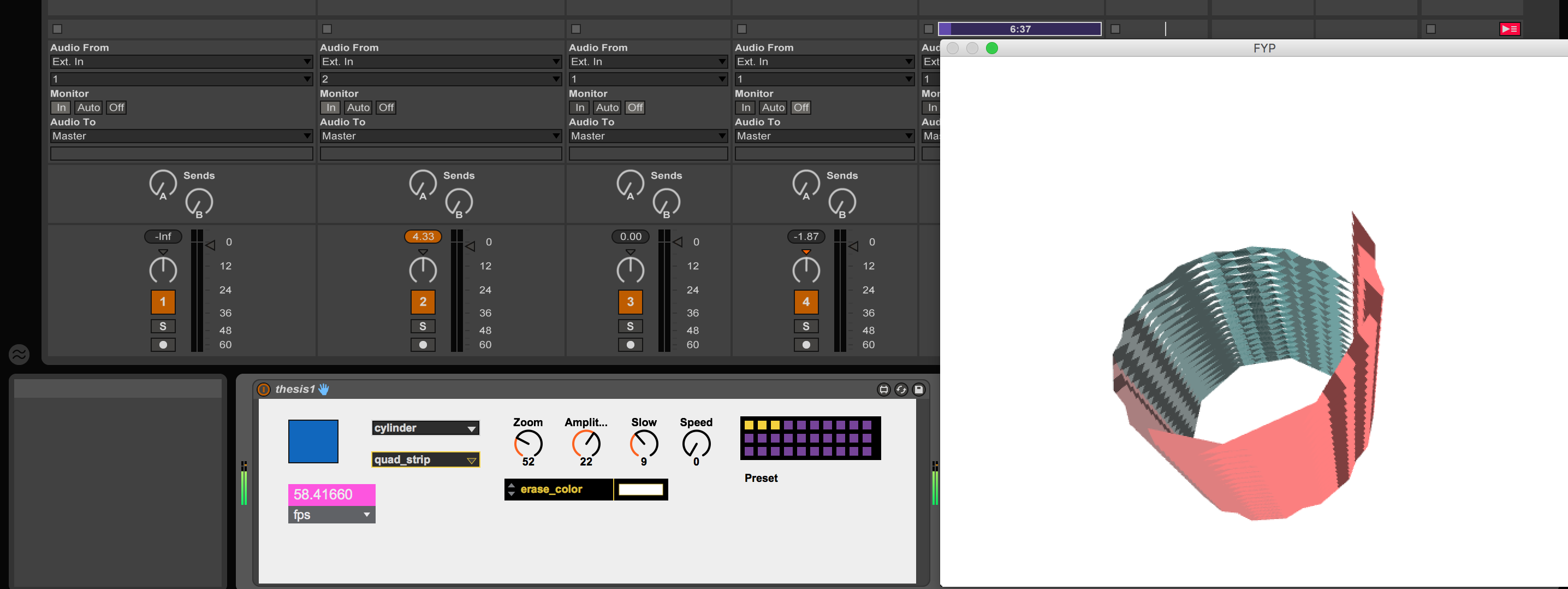

The qmetro object sends out bangs at a regular interval, which sets the frame rate. It is switched on with the toggle, and once the toggle is selected, qmetro starts the video. Unlike the metro object, which triggers a bang at the set interval at all times, qmetro runs at low priority, meaning it will slow down its bangs depending on current CPU usage, resulting in a lower frame rate. To view the frame rate, the jit.fpsgui object is used. This is attached to the jit.gl.render object.
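To get a feel for why a low priority timer lowers the frame rate under load, here is a simplified Python model. It is only a sketch, not how Max schedules qmetro internally: it assumes a new frame can start only once the previous one has finished, and the function name and the millisecond figures are made up for illustration.

```python
def frames_drawn(interval_ms, render_cost_ms, duration_ms):
    """Count frames started within duration_ms.

    Simplifying assumption: the next frame starts after whichever is
    longer, the requested interval or the time the last frame took.
    """
    time_ms = 0
    frames = 0
    while time_ms < duration_ms:
        frames += 1
        time_ms += max(interval_ms, render_cost_ms)
    return frames


# A 33 ms interval with a light patch versus a heavy one, over one second.
print(frames_drawn(33, 10, 1000))
print(frames_drawn(33, 50, 1000))
```

When each frame is cheap, the requested interval sets the pace. When a frame takes longer than the interval, the rendering cost sets the pace instead, and the count per second falls.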

To allow OpenGL drawing and rendering, the jit.gl.render object is needed. This renders OpenGL objects to the destination window.

For video to be rendered successfully, a message is required to erase and draw each frame. A trigger object with the arguments bang and erase (t b erase) is used. Each time it receives a bang from qmetro, it first sends erase to clear the existing frame and then sends a bang to draw the next frame. This process is repeated for every frame.

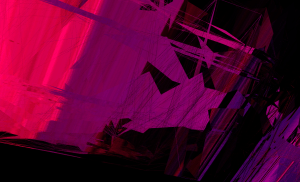

Leaving out this message results in each new image being drawn on top of the previous one, over and over again on the same frame.
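The erase-then-draw ordering can be sketched in Python. The trigger object in Max sends its outputs from right to left, so with the arguments bang and erase the erase goes out first. Everything else here is a hypothetical stand-in: the `Renderer` class is a toy, not jit.gl.render, and a "frame" is just a list of labels.

```python
def trigger(*outputs):
    """Return outputs in the order a trigger fires them: right to left."""
    return list(reversed(outputs))


class Renderer:
    """Toy render target: the frame is a list of whatever has been drawn."""

    def __init__(self):
        self.frame = []
        self.step = 0

    def receive(self, message):
        if message == "erase":
            self.frame = []  # clear what was drawn before
        elif message == "bang":
            self.step += 1
            self.frame.append(f"shape@{self.step}")  # draw the current shape


def run(ticks, with_erase=True):
    """Feed the renderer one trigger output per tick and return the frame."""
    renderer = Renderer()
    outputs = ("bang", "erase") if with_erase else ("bang",)
    for _ in range(ticks):
        for message in trigger(*outputs):
            renderer.receive(message)
    return renderer.frame


print(run(3))                    # only the latest shape remains
print(run(3, with_erase=False))  # every shape piles up on the same frame
```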

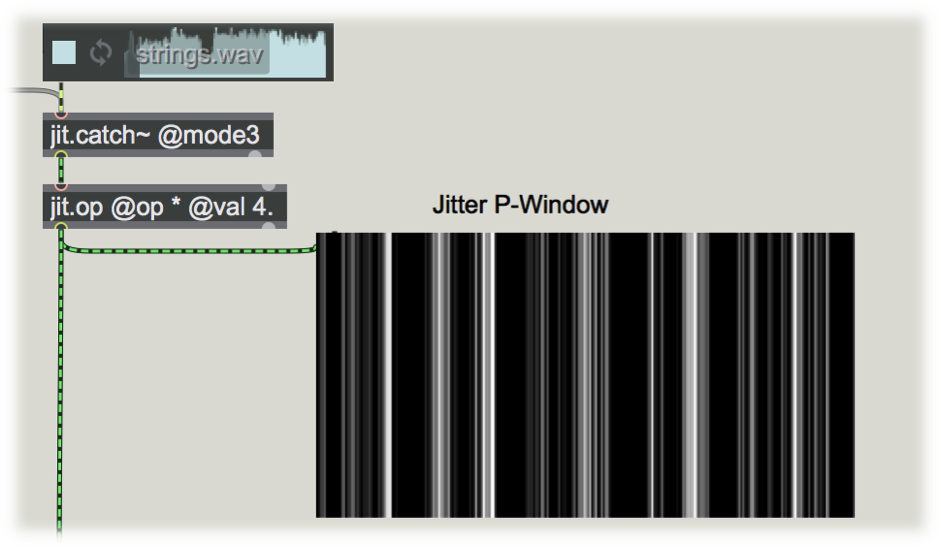

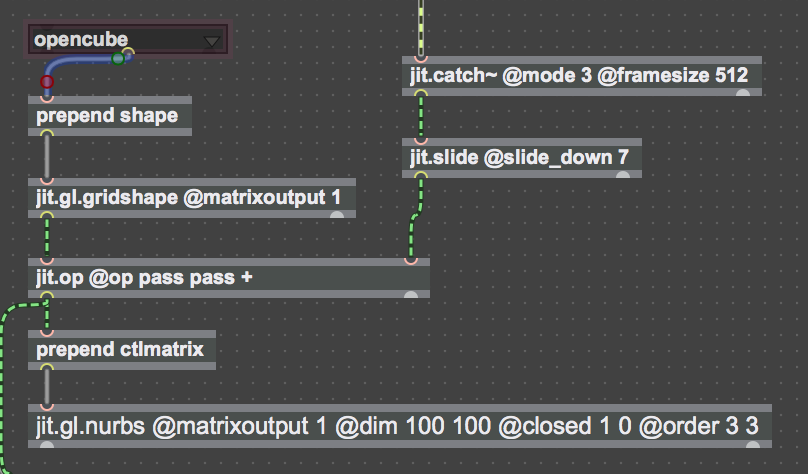

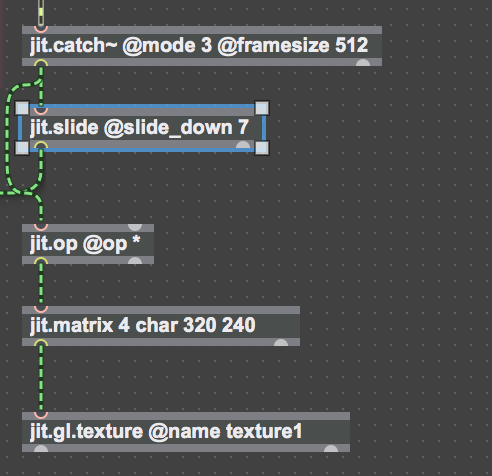

To analyse the audio, the jit.catch~ object is used, which transforms audio into matrices. These matrices can be seen by connecting a jit.pwindow to the outlet of the object.
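The general idea of turning a stream of audio samples into a matrix can be sketched in Python. This is not a description of how jit.catch~ works internally or of its output modes; the function name, the frame size of 256 and the 440 Hz test tone are all assumptions chosen for illustration.

```python
import math


def catch_frames(samples, frame_size):
    """Group a flat list of samples into rows of frame_size.

    An incomplete row at the end is dropped.
    """
    full = len(samples) - len(samples) % frame_size
    return [samples[i:i + frame_size] for i in range(0, full, frame_size)]


# 1000 samples of a 440 Hz sine wave at a 44100 Hz sample rate.
signal = [math.sin(2 * math.pi * 440 * n / 44100) for n in range(1000)]
matrix = catch_frames(signal, 256)

print(len(matrix), "rows of", len(matrix[0]), "samples")
```

Each row is a short snapshot of the waveform, which is the kind of data that can then be used to push a shape around.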

The next stage is to create a shape. To do this, add the jit.gl.gridshape object. This creates defined shapes such as sphere, torus, cube, plane, circle and others.

The jit.op object is added next. It is used to add the matrices from the jit.catch~ object to the gridshape. The @ symbols represent attributes of an object. In the case of jit.gl.gridshape, @shape sphere is added, which will automatically draw a sphere once the main toggle switch is pressed.
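Combining two matrices cell by cell, which is the role jit.op plays here, looks like this in Python. It is a minimal sketch with made-up numbers: `matrix_op` is a hypothetical helper, and the small lists stand in for the shape data and the audio data.

```python
import operator


def matrix_op(op, left, right):
    """Apply op to each pair of matching cells in two same-sized matrices."""
    return [
        [op(a, b) for a, b in zip(left_row, right_row)]
        for left_row, right_row in zip(left, right)
    ]


shape_values = [[1, 2], [3, 4]]      # stand-in for gridshape data
audio_values = [[10, 20], [30, 40]]  # stand-in for audio matrix data

print(matrix_op(operator.add, shape_values, audio_values))
```

Swapping `operator.add` for `operator.mul` changes the operation without touching the rest, in the same way that changing the operator on jit.op changes how the audio affects the shape.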

To add a menu for an attribute, click on the left-hand side of the object (in this case the jit.gl.gridshape object) and select shape. This adds a scrollable menu that lets you switch between the predetermined shapes. The gridshape object is then attached to the jit.gl.mesh object, whose attributes give you different draw modes such as polygon, line, point, triangle, quads and line loop. These determine how the shape will be drawn. The @auto_colors attribute is added to give the gridshape an array of colours.

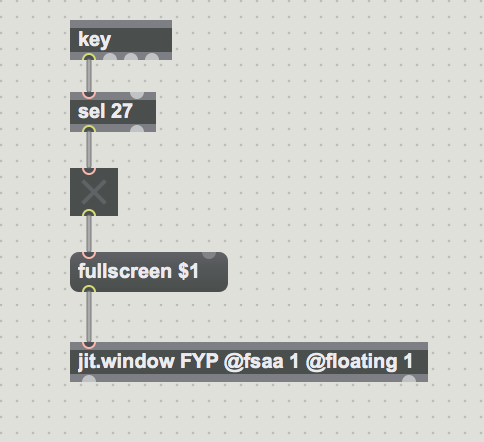

The jit.window object is added. This creates a floating window where your visuals will be rendered.

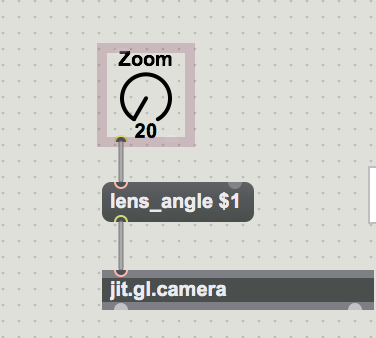

The jit.gl.handle object is added. This allows you to move the gridshape with your mouse: it can be rotated, zoomed in and out (hold Alt and click the mouse), or positioned on screen (hold Cmd and click the mouse).

Finished Max for Live Patch

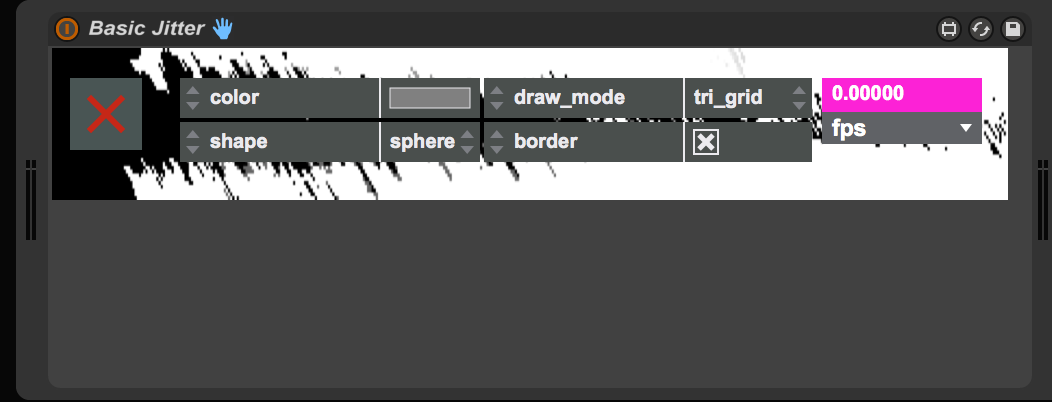

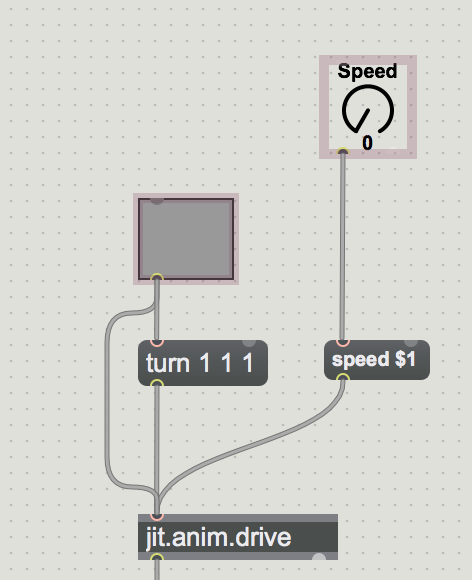

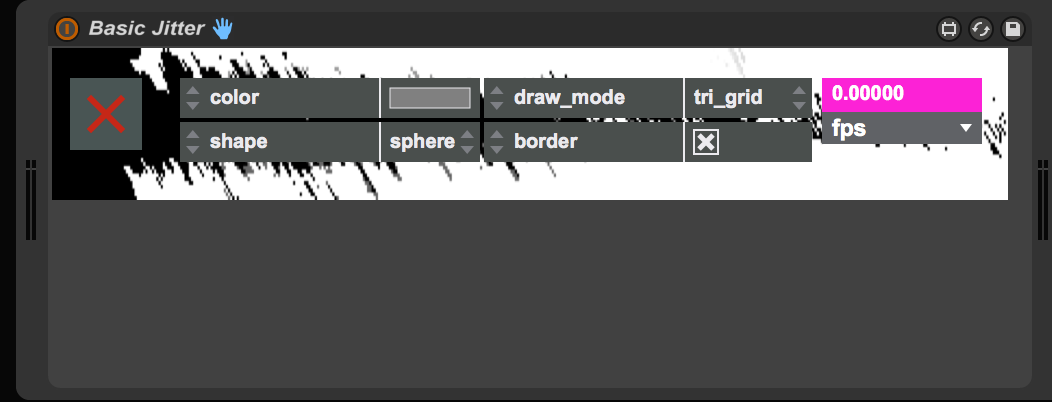

In Max there is both a Patching Mode and a Presentation Mode, the latter being used to create the graphical user interface shown in Ableton Live, an example of which can be seen below.

To add an object to Presentation Mode, right click it and select “Add To Presentation Mode”. When all relevant objects have been added, press Alt+Cmd+E or the yellow highlighted button in the screenshot below to switch between Patching Mode and Presentation Mode.

When in Presentation Mode, all relevant objects can be positioned above the vertical device limit. The device can be downloaded here. Press Cmd+E to unlock the patch and switch to Patching Mode to view this basic patch.

If you have any questions, feel free to leave a comment. Thank you for reading.

I should have another post up soon enough, so please follow if interested.
